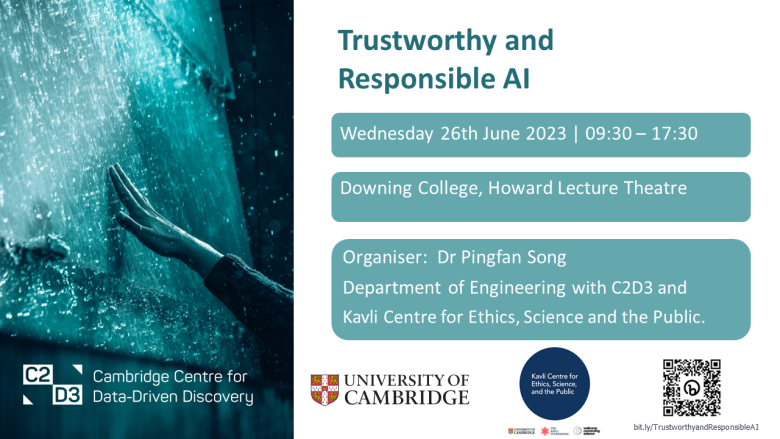

Mon, 26 Jun 2023 9:30 AM - 5:30 PM

Memoirs of the Trustworthy and Responsible AI Conference at Cambridge

The Trustworthy and Responsible AI Conference was successfully held at Downing College, University of Cambridge, on June 26, 2023.

Relive the inspiring keynote talks, insightful panel discussions, vibrant networking sessions, delightful drinks and formal dinner. Experience the energy and passion of the participants as they shared their insights, research findings, and visions for a trustworthy and responsible AI future.

This short video takes a captivating journey through the highlights and memorable moments of the conference, offering a glimpse into the intellectually stimulating atmosphere that permeated every corner. Access to the photographs can be found here.

Conference Details

Principal Partner: Kavli Centre for Ethics, Science and the Public

Other supporting partners: AI@Cam, Bitfount, Centre for Human-Inspired Artificial Intelligence (CHIA) and Cambridge Global Consulting Ltd (CGC).

Artificial Intelligence (AI) systems are increasingly being deployed in society and are having a profound impact on our daily lives. Despite the various advantages of these AI systems, it is also necessary to prevent the direct and indirect harm and risks they may pose to users and society. It is therefore critical to ensure that these AI systems are trustworthy, responsible, safe and ethical, particularly in high-stakes real-world applications. Meanwhile, research-users are strongly encouraged to use AI systems with wisdom, prudence and integrity.

Trustworthy and responsible AI has been gaining significant attention from the government, industry and scientific communities. This conference will bring together speakers, delegates and research-users with diverse backgrounds, such as industry leaders, policymakers, government officials, frontier academic researchers and data scientists, to share cutting-edge knowledge, inspiring findings and views in this timely and trending domain. Such an occasion will facilitate knowledge dissemination to a wide range of research-users, help identify challenges and mutual strengths, create opportunities to build and strengthen partnerships and collaborations, and take the conversation further through highly interactive discussion and networking activities.

Posters

Poster registration is now closed.

Registrations

Delegate registration is now closed. This event is full; please only attend if you have received a confirmation email.

Programme

09.30-09.45 Registration and arrival refreshments

09.45-10.00 Opening and Introductions

10.00-11.30 Session 1: Chair - Dr Lefan Wang

Keynote talks:

- Prof. Zoe Kourtzi - Robust and interpretable AI-guided tools for early dementia prediction

- Dr David Krueger - Baby Steps Towards Safe and Trustworthy AI in Large Scale Deep Learning

- Dr Richard Milne - AI, trust and the public

11.30-11.50 Break with refreshments

11.50-12.30 Session 1 Panel Discussion: Chair - Dr Richard Milne

Challenges and issues in existing AI Systems: opaqueness, bias, fragility, privacy invasion, inefficiency, lack of ethical guidelines,...

- Prof. Alexandra Brintrup, Prof. Helena Earl, Dr David Krueger, Dr Richard Milne and Dr Sebastian Pattinson

12.30-13.30 Lunch

13.30-15.30 Session 2: Chair - Dr. Vihari Piratla

Keynote talks:

- Prof. Alessandro Abate - Certified learning, or learning for verification?

- Dr Lucas Dixon - Large Language Models, Prototypes & Responsibility

- Prof. Miguel Rodrigues - Fair Federated Learning

- Dr Blaise Thomson - How the real world impacts the use of trustworthy & responsible AI

15.30-15.50 Break with refreshments

15.50-16.30 Session 2 Panel Discussion: Chair - Dr. Carolyn Ashurst

Measures and Techniques to Achieve Trustworthy and Responsible AI - Enhancing transparency, interpretability, fairness, robustness, privacy protection, efficiency, regulation and accountability, Human-AI collaboration, Continual Monitoring,...

- Prof. Alessandro Abate, Dr. Carolyn Ashurst, Dr Lucas Dixon, Prof. Miguel Rodrigues, Dr Blaise Thomson and Dr Miri Zilka

16.30-16.40 Wrap up and thank you

16.40-17.30 Session 3: Poster session and networking

Watch the Sessions

Speakers, Chairs and Panellists

Prof. Alessandro Abate, University of Oxford

Alessandro Abate is Professor of Verification and Control in the Department of Computer Science at the University of Oxford, where he is also Deputy Head of Department. Earlier, he did research at Stanford University and at SRI International, and was an Assistant Professor at the Delft Center for Systems and Control, TU Delft. He received an MS/PhD from the University of Padova and UC Berkeley. His research interests lie in the formal verification and control of stochastic hybrid systems, and in their applications in cyber-physical systems, particularly involving safety criticality and energy. He blends in techniques from machine learning and AI, such as Bayesian inference, reinforcement learning, and game theory.

Dr. Carolyn Ashurst, Alan Turing Institute

Carolyn is a Turing Research Fellow in Safe and Ethical AI at the Alan Turing Institute, the UK’s national centre for data science and AI. Her work is motivated by the question: How do we ensure AI and other digital technologies are researched, developed and used responsibly? Her research into algorithmic fairness seeks to understand the fairness implications of data-driven systems through theoretical, practical and domain-specific lenses. As well as mitigating the impacts from deployed systems, her work in responsible research seeks to understand the role of the machine learning (ML) research community in navigating the broader impacts of ML research. Carolyn also works to convene technical, policy, and domain experts, and sits on a range of advisory boards and working groups, including with the FBI, CSIS, ICO, PAI, OECD and the Turing Research Ethics process.

Prof. Alexandra Brintrup, University of Cambridge

Alexandra is Professor in Digital Manufacturing and leads the Supply Chain Artificial Intelligence Lab. She is a fellow of Darwin College.

Having trained as a manufacturing systems engineer and then embarking on a research career in Artificial Intelligence, she is fascinated by the merger of the two. Her research interests include:

- Predictive Data Analytics, especially for predicting and handling uncertainty in supply chains and other emergent manufacturing systems.

- Development of automated and scalable optimisation and distributed decision making technologies for Supply Chain Management and Logistics, particularly with nature-inspired algorithms and Multi-agent Systems.

- Identification of emergent patterns in industrial systems, particularly in relation to robustness, resilience and quality outcomes.

Dr Lucas Dixon, Lead of People and AI Research (PAIR)

Lucas is a research scientist and lead of PAIR (People and AI Research). He works on visualisation, explainability and control of machine learning systems, and specifically language models. His work explores how people can productively and fairly benefit from machine learning systems. Previously, he was Chief Scientist at Jigsaw where he founded engineering and research. He has worked on a range of topics including security, formal logics, machine learning, and data visualization. For example, he worked on uProxy & Outline, Project Shield, DigitalAttackMap, Syria Defection Tracker, unfiltered.news, Conversation AI and the Perspective API. Before Google, Lucas completed his PhD and worked at the University of Edinburgh on the automation of mathematical reasoning and graphical languages mostly applied to quantum information. He also helped run a non-profit working towards more rational and informed discussion and decision making, and was a co-founder of TheoryMine - a playful take on automating mathematical discovery.

Prof. Helena Earl

Helena has extensive experience in clinical and translational research in breast and gynaecological cancers, with the aim of individualising cancer treatments for patients.

Prof. Zoe Kourtzi, University of Cambridge

Zoe Kourtzi is Professor of Computational Cognitive Neuroscience at the University of Cambridge. Her research aims to develop predictive AI-guided models of neurodegenerative disease and mental health with translational impact in early diagnosis and personalised interventions. Kourtzi received her PhD from Rutgers University and was a postdoctoral fellow at MIT and Harvard. She was a Senior Research Scientist at the Max Planck Institute for Biological Cybernetics and then a Chair in Brain Imaging at the University of Birmingham, before moving to the University of Cambridge in 2013. She is a Royal Society Industry Fellow, Fellow and Cambridge University Lead at the Alan Turing Institute, and the Scientific Director for Alzheimer's Research UK Initiative on Early Detection of Neurodegenerative Diseases (EDoN).

Prof. David Krueger, University of Cambridge

I am an Assistant Professor at the University of Cambridge and a member of Cambridge's Computational and Biological Learning lab (CBL) and Machine Learning Group (MLG). My research group focuses on Deep Learning, AI Alignment, and AI safety. I’m broadly interested in work (including in areas outside of Machine Learning, e.g. AI governance) that could reduce the risk of human extinction (“x-risk”) resulting from out-of-control AI systems. Particular interests include:

- Reward modeling and reward gaming

- Aligning foundation models

- Understanding learning and generalization in deep learning and foundation models, especially via “empirical theory” approaches

- Preventing the development and deployment of socially harmful AI systems

- Elaborating and evaluating speculative concerns about more advanced future AI systems

Dr. Richard Milne, Kavli Centre for Ethics, Science and the Public

I am a social scientist whose work addresses social and ethical challenges associated with new science and technology and the relationship between science and the public, primarily in the domains of genomics and the development of data-driven medicine. I am based in the Kavli Centre for Ethics, Science, and the Public in the Faculty of Education and in the Engagement and Society Group at Wellcome Connecting Science, which is located alongside the Sanger Institute outside Cambridge. In Cambridge, I also co-lead the 'Ethics, Law and Society' strand of Cambridge Public Health.

Dr. Sebastian Pattinson, University of Cambridge

Sebastian Pattinson is a University Lecturer in the Department of Engineering at the University of Cambridge developing 3D printed medical devices and learning 3D printing systems. Before joining the Department, Sebastian was a postdoctoral fellow in the Department of Mechanical Engineering at MIT where he developed (1) new additively manufactured devices whose structure and composition are designed to improve interaction with the human body and (2) scalable and sustainable methods for 3D printing cellulose, the world's most abundant organic polymer. He received Ph.D. and Masters degrees in the Department of Materials Science & Metallurgy at the University of Cambridge, where he developed synthesis methods to control the structure and function of nanomaterials. His awards include a UK Academy of Medical Sciences Springboard award; US National Science Foundation postdoctoral fellowship; UK Engineering and Physical Sciences Research Council Doctoral Training Grant; MIT Translational Fellowship; and a (Google) X Moonshot Fellowship.

Prof. Miguel Rodrigues, University College London

Miguel Rodrigues is a Professor of Information Theory and Processing at University College London; he leads the Information, Inference and Machine Learning Lab at UCL; and he has also led the MSc in Integrated Machine Learning Systems at UCL. He is the UCL Turing Liaison (Academic) and he is also a Turing Fellow.

He has held appointments at institutions worldwide, including Cambridge University, Princeton University, Duke University, and the University of Porto, Portugal. He obtained his undergraduate degree in Electrical and Computer Engineering from the Faculty of Engineering of the University of Porto, Portugal and his PhD degree in Electronic and Electrical Engineering from University College London.

Prof. Rodrigues’s current research concentrates on the foundations and applications of machine learning. He is a Fellow of the IEEE.

Blaise Thomson, Founder and CEO of Bitfount

Blaise Thomson is the founder and CEO of Bitfount, a federated machine learning and analytics platform. He was the founder and CEO of VocalIQ, which he sold to Apple in 2015, subsequently leading their Cambridge, UK engineering office and holding the role of Chief Architect for Siri Understanding. Blaise holds a PhD in Computer Science from the University of Cambridge, where he was also a Research Fellow, and is an Honorary Fellow at the Cambridge Judge Business School.

Dr Miri Zilka, University of Cambridge

Miri is a Leverhulme Research Fellow in the Machine Learning Group at the University of Cambridge, a College Research Associate at King’s College Cambridge, and an Associate Fellow at the Leverhulme Centre for the Future of Intelligence. She works on Trustworthy Machine Learning, with a focus on the deployment of algorithmic tools in criminal justice. As part of her Leverhulme fellowship, she is developing a human-centric framework for evaluating and mitigating risk in causal models.

Before Cambridge, Miri was a Research Fellow in Machine Learning at the University of Sussex, focusing on fairness, equality, and access. She obtained a PhD in Analytical Science (Physics) from the University of Warwick, and holds an M.Sc. in Physics and a dual B.Sc. in Physics and Biology from Tel Aviv University.

Organisers

Dr. Pingfan Song

Dr. Pingfan Song is a senior research associate with the Machine Learning group of the Department of Engineering at the University of Cambridge. His research focuses on trustworthy machine learning, signal / image processing, sparse modeling and sampling theory. His research has been applied to multi-disciplinary fields such as medical imaging, biological imaging, and other computational imaging tasks and inverse problems. He is endorsed under the Global Talent route by UK Research and Innovation (UKRI). He is a member of the steering committee of the Cambridge Centre for Data-Driven Discovery (C2D3), and one of the winners of the C2D3 Early Career Research Seed Fund 2022 and of an Impact and Knowledge Exchange grant 2023 from HEIF (Higher Education Innovation Fund). http://www.eng.cam.ac.uk/profiles/ps898

Dr Vihari Piratla, University of Cambridge

Vihari Piratla is a postdoc with the Machine Learning Group of Cambridge University, supervised by Dr Adrian Weller. From 2017 to 2022, he was a PhD student with the Computer Science department of IIT Bombay. He is passionate about research challenges that arise when deploying Machine Learning systems in the wild. He was awarded a Google PhD Fellowship in 2020.

Dr Lefan Wang, University of Cambridge

Lefan Wang has joined the IfM as a Research Associate in the Complex Additive Materials Group. Before this, she worked as a postdoctoral researcher in Mechanical Engineering at the University of Leeds. She received her PhD degree from Cardiff University in 2018. Her research interests include advanced sensing techniques, mobile medical/healthcare systems, additive manufacturing, and data analysis based on machine learning.

C2D3 team: Lisa Curtis, Ellen Ashmore Marsh